How iFood Enhanced Feature Management to Combat Fraud

In this publication, we present how iFood benefited from using a Features Platform, which in addition to simplifying feature management, also provided a robust, efficient infrastructure and mitigated latency problems previously faced in fraud detection and combat.

The digital revolution has brought challenges and opportunities to the world of food delivery. Emerging as a leading Food Tech in Latin America, iFood has constantly adapted to growing demand. But with more users and partnerships came enhanced responsibility: ensuring an impeccable user experience.

Fraud prevention is crucial in the Food Tech ecosystem. As a company that processes millions of orders daily, iFood has become an attractive target for fraudsters, especially those conducting tests with stolen or generated cards. Fraud attempts not only harm the platform’s financial integrity but also compromise the trust of customers and partner establishments. In response to this persistent threat, iFood has developed a strategic approach that combines traditional security mechanisms with advanced artificial intelligence models. These models are trained to recognize suspicious patterns and evaluate, among various parameters, the risk of future transaction chargebacks. Thus, each order is not just a transaction, but also a continuous verification of legitimacy, ensuring the safety of everyone involved in the process.

However, managing these models is a very complex task. One of the main challenges faced is keeping model characteristics (features) updated and ensuring integrity and low latency in data access. And this is where iFood’s innovation comes in: the Features Platform. This solution was designed to simplify the lives of Data Scientists. While they define ‘what’, the platform manages ‘how’, ensuring data coherence and promoting effective collaboration between teams. Supported by AWS infrastructure, the Features Platform not only addresses the intrinsic challenges of model management but also allows iFood’s team to focus on what they do best: offering a superior experience to their customers.

A Features Platform is, essentially, a centralized system that facilitates the management and utilization of ML features throughout the organization. Features are the individual inputs (or variables) that feed an ML model. Once these features are created, they need to be stored, managed, and retrieved efficiently for model training and inference.

Why is it crucial?

1. Reusability: With a Features Platform, data scientists and ML engineers don’t need to recreate or reprocess features every time they develop a new model. Existing features can be accessed and shared by different models, saving time and resources.

2. Consistency: Ensures that all teams are using the same definitions and transformations of features. This is vital to ensure that insights and predictions generated by different models are consistent and reliable.

3. Efficiency: Reduces complexity when managing the lifecycle of features, from creation to deployment. It also reduces latency, as the features necessary for real-time inference are readily available.

4. Scalability: The platform is optimized to handle high data volumes, ensuring effective feature construction.

5. Monitoring/Governance: Offers complete transparency in feature calculation, recording sources, filters, aggregation and maintaining a history of changes.

Proposed solution

Fraud detection models are vital, particularly in scenarios where response time is essential, as is the case with iFood. The challenge lies not only in operating a critical model in real time, but also in the demands imposed on feature productization, an aspect that, by itself, constitutes a major engineering challenge. The requirement is for fast and consistent responses, which drove iFood to develop the Features Platform.

Before the platform, the average response time of the fraud system was 250 ms. With the platform implementation, this time was reduced to 50 ms, evidencing a significant improvement in system response. The platform not only simplified the creation and management of features, but also ensured consistency, addressing the problem of differences between feature logic executed in a training pipeline and feature logic for inference (feature skew), a critical issue in productizing fraud detection models.

Developed primarily to process features in real time, the Features Platform integrates tools like Apache Spark, Apache Kafka, Delta Lake and Redis, this combination ensures maximum performance, regardless of data volume, establishing a new standard of efficiency and effectiveness in fraud detection and prevention. This robust infrastructure not only accelerates the detection of fraudulent activities, but also provides a more agile and flexible environment for data scientists, facilitating continuous innovation and improvement of fraud models.

How does the Features Platform drive innovation at iFood?

The routine of a data science team is full of complex steps, for example, managing data pipelines, feature engineering, monitoring models in real time, etc. One of the most laborious phases is feature operationalization; establishing a robust infrastructure to create and test features is undoubtedly a challenge. This is where the Features Platform excels, providing agility for teams. The platform not only optimizes processes, but also brings with it a series of benefits, such as:

– Agility in Model Development: The Features Platform allows iFood’s data scientists to focus on experimenting and optimizing models, instead of spending time on feature productization.

– Continuous Integration and Delivery (CI/CD) for Features: The descriptive nature of features facilitates continuous integration and delivery, allowing updates to be implemented more quickly and smoothly.

– Improved Collaboration: With a centralized repository, teams can collaborate better, sharing insights and improvements in features.

– Fast and Reliable Availability: The platform not only stores features, but also ensures they are delivered effectively and in real time to production models. This is crucial for low-latency models.

How does iFood’s Features Platform work?

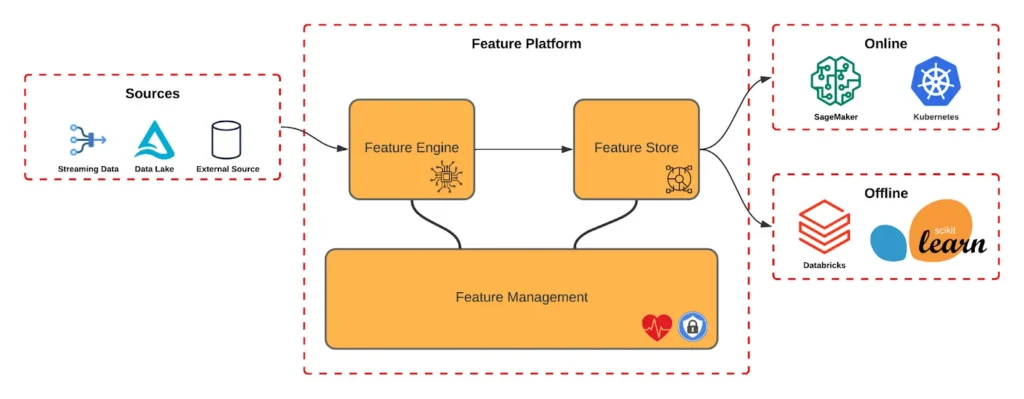

The features platform is composed of three main abstractions: the Feature Engine, the Feature Store and the Feature Management, as can be observed in Image 1. Each layer is responsible for ensuring the necessary steps to deliver features as quickly as possible while ensuring security, resilience and simplicity.

Feature Engine

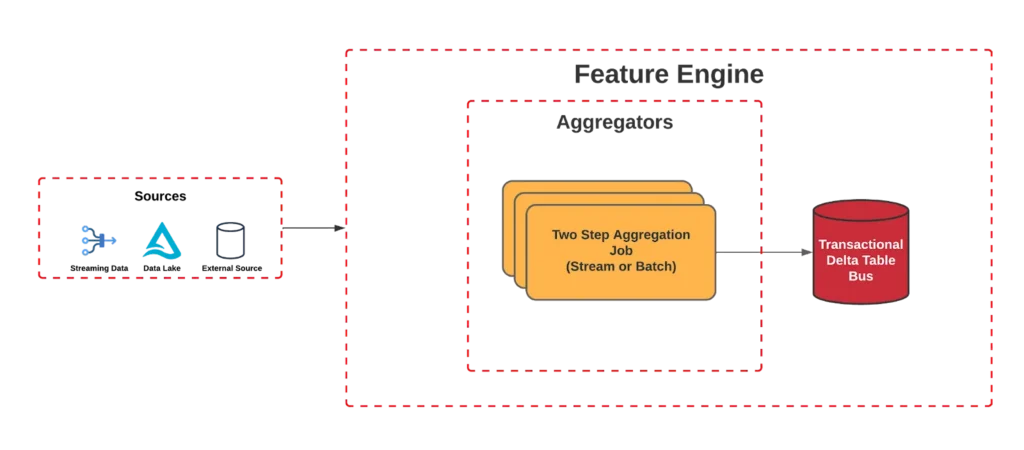

This layer consists of the abstraction of the entire platform aggregation engine. The aggregation process is fundamental to transform large volumes of data into meaningful information that can be used for analysis, reports and decision making.

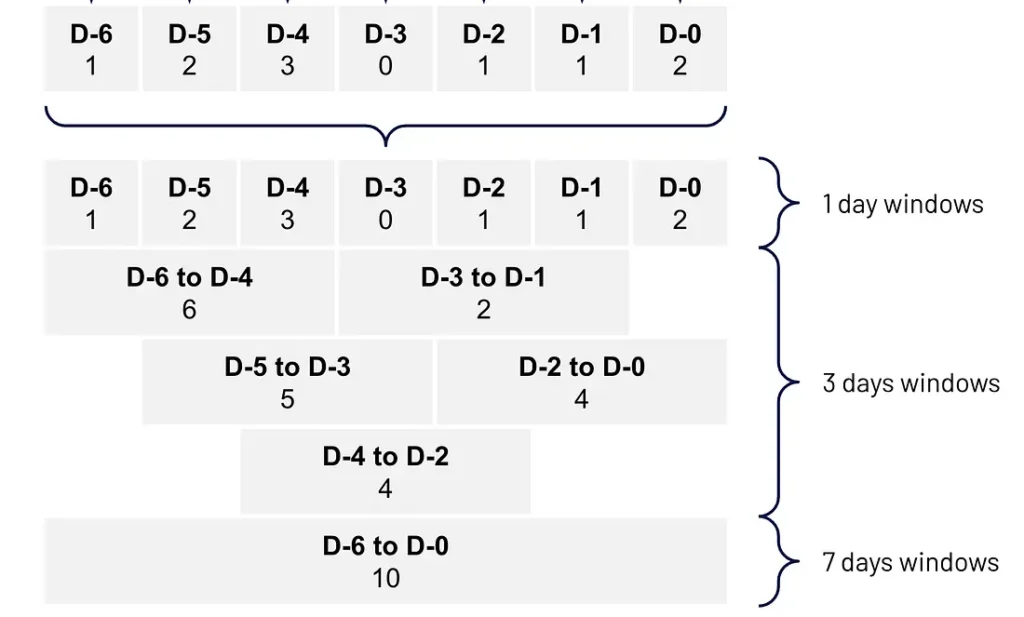

We also noticed that in feature development Data Scientists usually use several similar features, varying only the window, for example, order count for the last 7, 30 and 60 days. That’s why we developed what we call two-step aggregation, as can be observed in image 2.

The Two Step Aggregator internally organizes data into smaller windows that we call step. For example, if the final objective is to aggregate data for a month, the step can be one day. This approach allows the results of these small segments to be stored as intermediate states, which are essentially partial results that will be used to create any larger windows.

Once intermediate states are stored, we combine these results to produce the final aggregation. For example, the results of each daily “window step” would be combined to produce the aggregated monthly result. This step offers some benefits, for example, there is a cost reduction, as it enables us to have multiple windows being processed in the same Spark query execution, something that native Spark does not support today, requiring a dedicated query for each window.

Furthermore, the platform brings streaming as the main focus, and with this we can use the same code base to process both batch and streaming data. That is, everything that works in streaming also works in batch processing.

Finally, each feature update is stored in a Delta Table located in an S3 bucket. Data is structured in transactional format, which means that every time a change is made to the feature, a new entry is recorded in this table. This facilitates the reconstruction of any database at subsequent moments, acting analogously to a transactional log of a database.

Feature Store

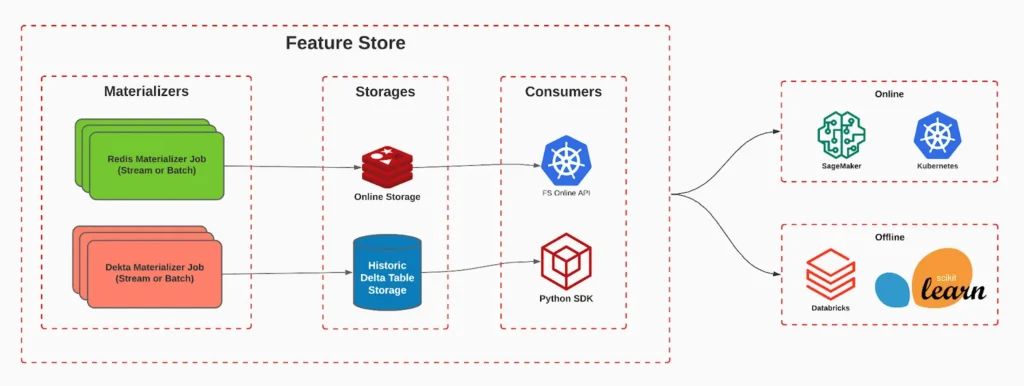

The Feature Store is a central component that serves as a repository for processed features ready for use in machine learning models.

The main advantage of the Feature Store is its ability to reuse and share features across various models and applications, providing consistency and minimizing the need for rework.

The Feature Store can be segmented into two categories, serving distinct needs:

Online Storage

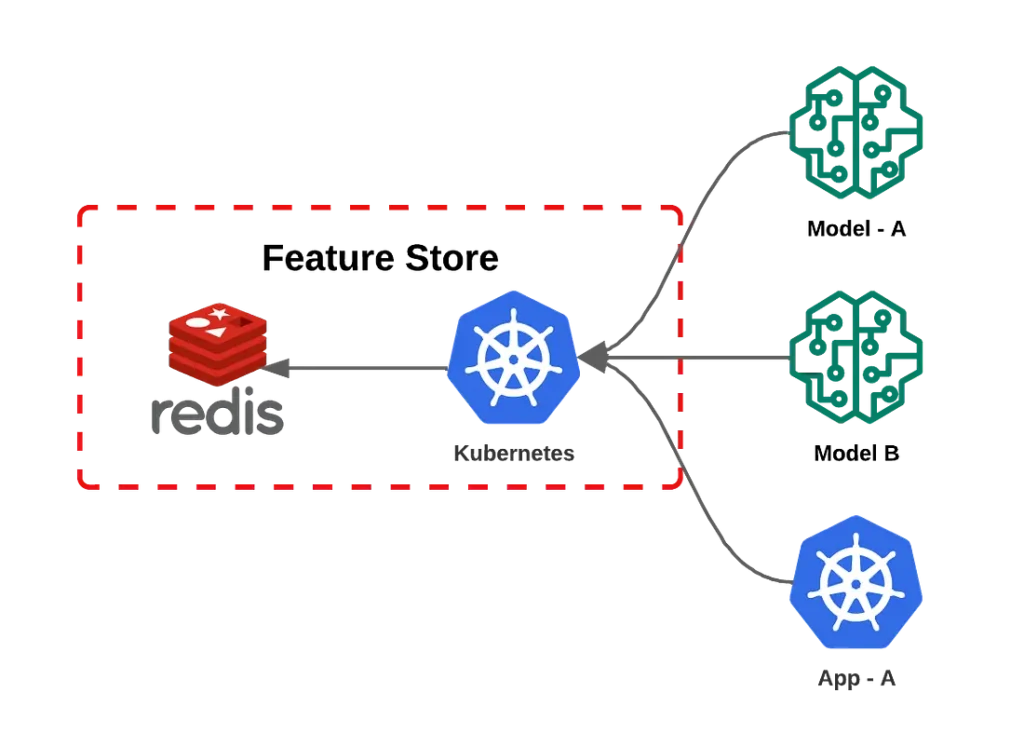

Online storage is designed to be fast and efficient. It is optimized for low-latency queries, making it ideal for real-time applications. At iFood we store features in a Redis, with this we achieve latencies of less than 10 milliseconds for feature retrieval, even with high request volume.

Main Characteristics:

Low Latency: We serve features in less than 10 milliseconds.

Scalability: Combining Redis with an application on Kubernetes, we effortlessly achieve rates of 3 million requests per minute. This solution is quite flexible and scalable, as during low traffic moments unnecessary resources are reallocated, bringing cost optimization.

Usage Examples:

– Dish and restaurant recommendations;

– Segmentations;

– Fraud detection.

Offline Storage

Offline storage, on the other hand, is optimized for capacity and durability. In addition to allowing storage of large volumes of data for long periods of time, it also enables “time travel”, that is, it allows retrieving values of a feature at a certain point in time, necessary for model training. For this we use Delta Table for our offline storage, this brings us great flexibility, mainly for having the possibility to use both streaming and batches to update it.

Main Characteristics:

– Large Capacity: Can store terabytes or even petabytes of data.

– Durability: Based on AWS S3, ensures security and fault resistance.

– Time Travel: Allows accessing features at specific time points efficiently.

Usage Examples:

– Machine learning model training: Models are trained using large datasets for better accuracy.

– Historical analysis: Allows trend analysis over time.

– Batch inference, enabling offline models to perform batch processing efficiently without impacting online flow.

For each type of storage, we use what we call ‘materializers’. These are responsible for reading transactions from the Delta Table and storing them in the appropriate destination. For online storage, we have the ‘Redis Materializer’, which is responsible for saving only the most recent version of the feature in a Redis for quick retrieval. On the other hand, the ‘Delta Materializer’ is responsible for ensuring history consistency, storing it in a Delta Table.

Feature Management

The Feature Engine layer is responsible for calculating the feature, the Feature Store layer is responsible for storing and serving features consistently, but there is still a range of services and applications that are necessary to keep the entire platform healthy and secure. For this we introduce the Feature Management layer, which ensures the integrity, efficiency and security of the features platform, acting as a guardian to ensure that features are generated, stored and accessed appropriately. One of Feature Management’s vital functions is continuous monitoring, which is crucial for maintaining the health of the features platform. Through it, it’s possible to identify problems before they affect end users, optimize platform performance and receive real-time notifications about any irregularities or failures.

In addition to monitoring, Feature Management is responsible for managing the lifecycle of features. This involves determining which features can be deleted, identifying which are frequently accessed and by whom, as well as monitoring costs and warning about features that are not being used. This layer also provides tools to modify or delete features as needed.

Versioning is another crucial function, allowing tracking and managing different versions of features, maintaining a history of all changes and ensuring that models and applications are using the correct version of features. In addition to keeping records of all operations performed, allowing tracking who accessed or modified features. In summary, Feature Management is fundamental to ensure that the features platform operates optimally and securely, meeting user needs.

How the platform solved problems for fraud combat

Although our fraud models already leverage the advantages of Amazon SageMaker, ensuring scalability and resilience, we faced internal challenges related to creating and maintaining features. These costly processes resulted in significant latencies, in addition to the other problems mentioned. But how does the team actually use the features platform in their routine?

The platform provides an SDK that provides facilitating mechanisms for feature retrieval both for training through dataset enrichment, and for inference where it offers an interface for retrieving the latest feature values.

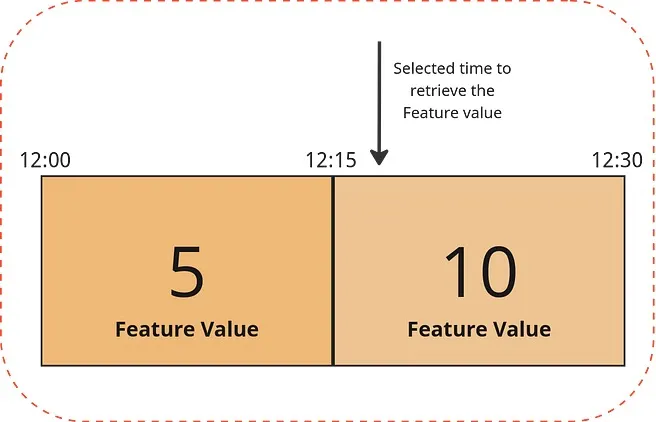

During training, our focus is to extract historical data using a unique identifier and a precise moment in time. To ensure non-exposure of future information, we adopt a closed time window approach. This means that the moment chosen for analysis must always be after the end of this window. For example, if we select a period after 12:15, we get the value 5. If we opt for a moment after 12:30, the value will be 10, and so on, as illustrated in image 5.

The final product of this process is a Spark dataframe, where each characteristic is converted into a distinct column. Our platform ensures correct data aggregation at the appropriate moment, eliminating risks such as future data leakage or data inconsistencies.

In the training context, data accuracy is our main concern, without the need to focus on latency. However, during the inference phase, latency assumes a crucial role. To meet this demand, we offer a REST API specialized in feature retrieval, which is highly scalable and capable of processing millions of requests per minute. During inference, we only need the identifier to access the most recent data. With this, each model accesses the features platform through the API retrieving all features necessary for its inference stage and then performs its prediction.

With this approach, we achieved a significant improvement in the efficiency of our models’ inference, reducing latency in feature retrieval from 250ms to 50ms. This optimization not only enables scientists to explore new possibilities, but also ensures an exceptional experience for iFood users.

Conclusion

The adoption of the Features Platform was crucial for iFood’s fraud team. Replacing manual and time-consuming processes, the team now has an automated and optimized solution. This platform not only simplified feature management, but also addressed and solved latency problems, reducing latency by approximately five times.

In addition to impacting processing speed, the platform also boosted collaboration and efficiency within the team. By treating features as code and offering a standardized library, reuse and discovery of features between teams was encouraged. This enabled data scientists to concentrate their efforts on creating and optimizing models, accelerating innovation.

The Features Platform, by reducing operational load and improving latency, elevated the fraud team’s performance standards. However, the benefits were not restricted to just this team. Today, the platform serves more than one billion daily requests, covering more than 800 features, and is used by teams like recommendation, logistics, marketing, among others, consolidating its value at iFood.

Article written by Willian Moreira

Build the future at iFood

We are always looking for passionate developers, designers and data scientists to help us revolutionize the food delivery experience. Join iFood Tech and be part of building the future of food technology.

Discover our CareersYou might also like to read

Read more about Dados & IA

How iFood’s generative AI connects personalization and communication through rigorous engineering patterns to scale unique user experiences

How iFood's generative AI connects personalization and communication through rigorous engineering patterns to scale unique user experiences From Grouped Campaigns to Individual Decisions [4:30 PM, Tuesday] Haven't had lunch yet, right? The Mushroom Risotto you love is waiting for you…

Yggdrasil: Scaling Authorization at iFood with Cedar and a Stateless Architecture

In a microservices ecosystem as dynamic and complex as iFood's, ensuring that people and systems have appropriate access to the right resources is a constant challenge. Historically, in most cases, authorization logic is implemented in a decentralized and inconsistent manner…

How did iFood manage to identify duplicate tables in the Data Lake?

iFood stands out as a data-driven organization, where product developments and strategic decision-making are grounded in data. To sustain this standard of excellence, it is essential to provide engineers, analysts and scientists with the necessary autonomy to create and manage…

Meet other Authors

Each article is the result of the vision and expertise of our authors. See who contributes to our blog: